2026-01-26

confessions of an llm addict

pls help, make no mistakes

I doubt that anything resembling genuine "artificial general intelligence" is within reach of current #AI tools. However, I think a weaker, but still quite valuable, type of "artificial general cleverness" is becoming a reality in various ways. - Terence Tao

I was recently reflecting on my LLM usage. My tool of choice is Google's Gemini model. I love it. I prompt Gemini all the time with all sorts of things. It's funny because the internet has such a visceral hatred for anything 'AI', whilst I feel like sometimes I've found the binary equivalent of the holy grail.

What do I use it for, you may ask?

Here's a short list:

- travel plans

- math questions

- programming questions

- modern history

- how and why bulletin board systems (BBSes) worked

- ...

- what are the ingredients to (insert photo) this food

Basically, I use it for (almost) everything.

Now why am I writing this blog post? Because I think most people don't know how to use LLMs right.

a note on artificial stupid

I quoted a slice of Terence Tao's Mastodon post at the start because I think he's got a very illuminating way to think about ai. The crux of it is that when humans are clever, there's a pretty high correlation with them being intelligent. It's obviously not 1:1[1], but it's high enough that it matters, and when someone does something 'clever', it's not in any way strange to associate them with being intelligent or smart.

We don't have that luxury with ai.

AI does clever things. That does not make it smart. When you use a tool in a smart way, to achieve a "clever" solution to some sort of problem, you are the intelligence behind that. You are the one using the tool. The ai doesn't think that way. In fact, it doesn't think. It just does. And sometimes we see that as being "clever", and some people end up conflating that with intelligence.

So, to lay some ground rules: if you really want to get some good use out of ai tools, understand that they are not intelligent. They are tools.

llms are making people stoopid

I'm not an expert on ML/AI (yet). I haven't done a single ML class yet (I'm about to, though!). I do think the way people use llms is misunderstood, though, and that is something I am qualified to talk about.

There's enough literature out there talking about how often llms are wrong, or hallucinate, to fill up my national library. They're not wrong. Llms get things wrong, they hallucinate, they say stupid stuff and do nonsensical things. But that's fine.

Think about it this way. You're in class. You just learnt a new topic. You don't quite get it, so you ask your friend right next to you: "hey bro did you understand that?"

They might explain it to you right, they may not. The point being that their hit rate isn't going to be 100%. You're probably not sitting next to the smartest person in the world. So when they give you an answer, you probably won't take it at face value. You'll accept the answer, question it, think about it, maybe even ask the teacher after class.

So when Gemini or GPT-4.1 is wrong, say, 17% of the time, is it really that big of a deal?

You question what they say, and you compare it with other sources. If it's something quantitative, you try to derive it yourself. You do a practice question to see if the model is right. If it's qualitative you can ask someone else. You can even ask another model. Or check wikipedia.
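To make "derive it yourself" concrete, here's a rough sketch of how I'd sanity check a quantitative answer. Everything in it is made up for illustration: the claim (that the integral of x^2 from 0 to 1 is 1/3) is a hypothetical model answer, and `midpoint_integral` is just a helper I'm naming here, not something from a library.

```python
def midpoint_integral(f, a, b, steps=10_000):
    """Approximate the integral of f over [a, b] with the midpoint rule."""
    width = (b - a) / steps
    return sum(f(a + (i + 0.5) * width) for i in range(steps)) * width


# Hypothetical claim from a model: the integral of x^2 from 0 to 1 is 1/3.
claimed = 1 / 3

# Independent numerical estimate that does not rely on the model at all.
estimate = midpoint_integral(lambda x: x ** 2, 0.0, 1.0)

# Accept the claim only if it matches the estimate to a small tolerance.
agrees = abs(estimate - claimed) < 1e-6
print(agrees)
```

It's not a proof, only a second opinion that doesn't come from the model. If the two numbers disagree, that's the cue to go digging.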

When the model is wrong, you should be able to notice that. Otherwise, you're using it wrong. If it's not something you can easily check yourself (the way you can with maths), then find another source.

Confirm, confirm, confirm.

This is exactly how I use it.

slingshots

Let's say you accept what I've said above. There's probably a very small proportion of the population that uses llms the way I do. I get that.

But what I've also realised is that ai can act like a learning slingshot. I don't know if anyone's used that phrase before in this context, but bear with me as I explain.

Traditionally, your learning is bound by a number of factors. First is your ability to learn: how fast you absorb concepts, how fast you can apply them, how quickly you can understand what things mean. Second is your ability to learn these concepts from somewhere[2]. Then third is having someone to bounce those ideas off, to check you're right.

The process probably looks something like: textbook or teacher shows you how to do integration $\rightarrow$ you apply integration to some practice questions $\rightarrow$ if you're wrong you ask the teacher why, and learn how to fix it; if you're right you get a gold star from said teacher. Now in this process, the teacher is a human. As much as we like to think we do, nobody has infinite patience. A teacher isn't going to give you 4 hours of free tutoring after the school bell has rung. Maybe you can ask again tomorrow. Plus, a teacher isn't always right. They aren't infallible.

Now compare this whole process to using your favourite llm. You can get immediate, infinitely patient feedback, at all hours of the day. You can learn concepts faster. You can cover more ground. You can get thousands of different explanations for something. First metaphor wrong? Ask for another one. Want more practice questions? Ask Claude. And so on.

The learning process with llms in the middle acts like a slingshot. You're able to reach higher levels of abstraction in a compressed period of time. This is the trick to using llms right.

wisdom

I was listening to an episode of Bloomberg's Odd Lots podcast with the head honcho at AQR talking about markets getting dumber. He talks about the wisdom of the crowd, a concept where collective decisions are better than singular ones. Effectively it means that the more people answer a certain question, the more likely the average answer is close to correct.

It sounds silly but think about it for a second.

You're with four friends and want to know how tall the Empire State Building is. Friend 1 says "200 metres", friend 4 suggests "no way, it's more like 500 metres". Friends 2 and 3 both guess somewhere around 350-400, so call it 375 each. Each guess misses the real figure by some amount, but the misses land on both sides of it, so the overestimates and underestimates largely cancel out. Sum all of those figures up, average them, and you get 362.5 metres. It's really 381 metres tall.

Not all situations work like this, of course, but generally this is the mathematical reason why it works.[3]
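If you want to poke at the arithmetic, here's a minimal sketch. The first part just averages the four guesses from the example, taking 375 as the midpoint of "350-400". The second part is a toy simulation under assumptions I'm making up for illustration: every guesser is independent, unbiased, and off by Gaussian noise with a spread of 100 metres. Real crowds are rarely that well behaved, and `average_crowd_error` is a hypothetical helper, not a library function.

```python
import random
import statistics

TRUE_HEIGHT_M = 381  # the figure quoted in the example above

# The four guesses from the example; 375 is the midpoint of "350-400".
guesses = [200, 375, 375, 500]
crowd_estimate = statistics.mean(guesses)
print(crowd_estimate)  # 362.5


def average_crowd_error(crowd_size, trials=2000, spread=100, seed=0):
    """Mean absolute error of the crowd's average guess.

    Assumes independent, unbiased guessers with Gaussian noise
    (an illustrative assumption, not a claim about real crowds).
    """
    rng = random.Random(seed)
    total = 0.0
    for _ in range(trials):
        crowd = [rng.gauss(TRUE_HEIGHT_M, spread) for _ in range(crowd_size)]
        total += abs(statistics.mean(crowd) - TRUE_HEIGHT_M)
    return total / trials


small_crowd_error = average_crowd_error(4)
large_crowd_error = average_crowd_error(100)
print(small_crowd_error > large_crowd_error)
```

Under those assumptions the bigger crowd's average lands closer to the truth, because independent errors shrink roughly with the square root of the crowd size. If everyone shares the same bias, no amount of averaging fixes it.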

So now apply this concept to your llm of choice. It's trained on text scraped from the internet, the collective amount of human knowledge that has been digitised, and it generates an 'average' of those inputs to give you an answer.

It won't be right sometimes, but most of the time it will be close enough.

the old internet

One of the biggest benefits to llms, outside of learning, is cutting through all of the bs on the internet. Have you tried finding a recipe online?

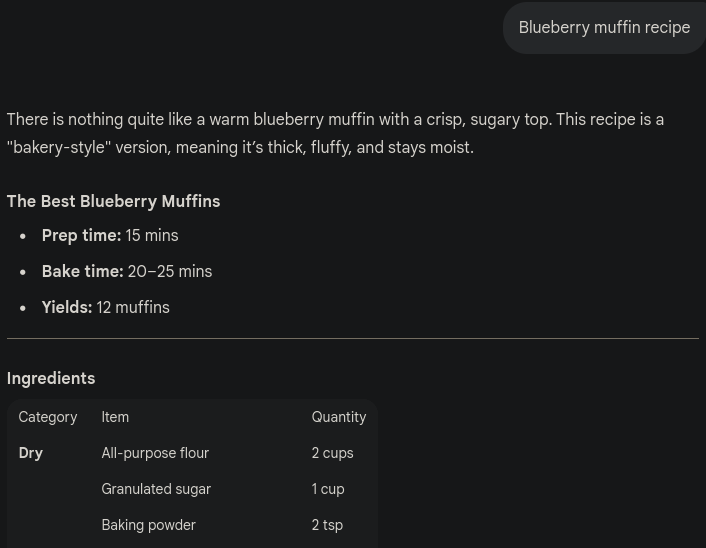

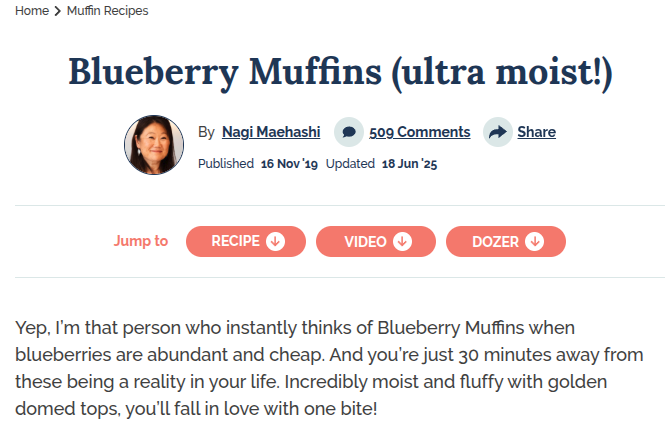

Most times you need to trawl through the blog version of War and Peace simply because it SEOs better. Take, for instance, finding a blueberry muffin recipe. Compare Gemini's output to the first result on DuckDuckGo.

What this means is that websites like this are going to eventually die. Traffic will subside, their ad income will reduce, and the blog will eventually shut once the domain renewal cost is more than the revenue generated. I have no problem with this.

Yes, it means that eventually the amount of content online will drop, originality will reduce, and the same thing will be constantly recycled by ai when you prompt it.

I don't care.

Websites did it to themselves with the extreme amount of intrusive ads, tracking and breaking for no good reason (I'm looking at you, websites that refuse to work with Firefox).

If you don't believe me, try using a stock standard Chrome browser session for a day without an ad blocker installed. It's like walking into a hoarder's house, with vermin and bugs everywhere. The internet is literally unusable without uBlock Origin.[4]

back to square one

Going back to where I started this post. The internet is going to change, learning will change. Those who know how to use a blade without cutting themselves will find that a sharp instrument is very useful. Others will lose fingers. Learn how to use AI to your benefit.

Speak with it, converse with it, bounce ideas back and forth, try to learn some new things. Check that it's correct, mark its working. Make that learning feedback loop shorter, and I guarantee you will find it immensely more useful than you realised was ever possible.

- ouzo

[1] I'm probably the prime exception to the rule. I sometimes do clever things, followed by completely stupid mistakes.

[2] I mean, maybe you are deriving everything from first principles. Most of us can't, though.

[3] I guess it's more like the most likely output, rather than strictly the average, but the idea is basically the same.

[4] If you aren't currently using uBO, please for your own sanity go and install it right now. It's open source and makes browsing the internet so much more bearable.